When the COVID-19 crisis forced all Wharton courses and classes online in March, many faculty members were caught off guard and scrambled to adapt their in-person lectures and labs for distance learning. Naturally, the Learning Lab saw an opportunity to help. Our team immediately got to work identifying universally applicable business simulations that could be set up and delivered remotely – and the first game to serve as a virtual guinea pig in this regard was OPEQ.

IT Director Joe Lee points out that OPEQ, in particular, is a good fit for remote delivery – though he admits the setup process was considerably more difficult than it would be in normal times: “Faculty need to be coached to manage their expectations regarding the effectiveness of the exercise, especially for faculty who have run the same exercise in-class previously.”

Erica Boothby, a Wharton lecturer and postdoctoral fellow, was one of the School’s first instructors to embrace remote delivery of what is normally a popular in-class simulation for her spring course on negotiations. “Students were very engaged,” she said of the OPEQ experience, noting that the “team” element of the game seemed to inject a boost of energy into her socially isolated students.

It quickly became apparent how much her class enjoyed it during the debrief, Boothby added. “They really felt the power of the social dilemma firsthand – how can you maximize the profits of your team but also of your world, which includes your competitors? OPEQ really set the stage nicely for our conversation about defection and cooperation, and trust in negotiation.”

And speaking of setting the stage, Joe also emphasizes how important it is to spend one-on-one time or hold training sessions with faculty members so they’re comfortable with the technology tools available to them to facilitate the exercise.

“Go over the ‘flow and show’ of the experience,” he says, referring to the end-to-end explanation of what faculty and students can expect before, during, and after the remote exercise. And, lastly, “Do everything you can to test and vet the process before getting in the classroom,” he adds, noting that he and the Learning Lab’s head of operations, Heather Meiers, met with each faculty member for 30 minutes before their class started to go through all the major steps in the upcoming experience, ensuring they were as comfortable as possible.

For Boothby, this made a huge difference in her level of comfort conducting OPEQ from home. “I couldn’t have asked for better support,” she said. “Heather walked me through the procedures thoroughly well in advance, she sent an email to my students informing them how to access the game and providing any information they needed in order to log into the correct world etc. And Joe was available on Slack to field any questions that came up during the simulation itself, so I felt very well supported.”

As a result, Boothby says she’d recommend remote use of OPEQ to other faculty members “without hesitation!” The main selling point, in her words, is that “the process is fully streamlined, which really enables the students – and instructor – to focus their full attention on the experience of the negotiation and the content of the lesson.”

Other tips and tricks the Learning Lab recommends for remote delivery:

- Make sure you have a way for students to get help during the exercise should they experience issues. We gave students a help e-mail address they could contact if they had technical issues, and we dropped into each virtual meeting room personally to check on them during the exercise.

- Make sure the exercise can run with variable attendance. OPEQ can run as long as there is at least one person on each team; we typically put 4-5 students on each team so that the experience can still run in the event of some absences.

- Build in some extra time for the exercise if you are used to running it in class. This allows time to resolve technical issues and answer questions.

- Determine how you’ll communicate with faculty or other facilitators during the exercise. OPEQ takes place in breakout rooms, and the faculty typically dip into and out of each room throughout the simulation. Heather had the idea of using Slack as a way for us to communicate with them while the exercise was being played – that way, no matter which “room” people were in, we were only a few keystrokes away if they needed assistance.
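The variable-attendance tip above can be checked quickly before the exercise starts. The following is only a minimal sketch, not part of OPEQ itself: the function names `build_teams` and `teams_missing_players`, the round-robin assignment, and the sample roster are all hypothetical and used purely for illustration.

```python
from typing import Dict, List, Set


def build_teams(students: List[str], team_size: int = 5) -> Dict[str, List[str]]:
    """Deal students round-robin into teams of at most `team_size` (hypothetical helper)."""
    if not students:
        return {}
    n_teams = -(-len(students) // team_size)  # ceiling division
    teams: Dict[str, List[str]] = {f"Team {i + 1}": [] for i in range(n_teams)}
    for idx, student in enumerate(students):
        teams[f"Team {idx % n_teams + 1}"].append(student)
    return teams


def teams_missing_players(teams: Dict[str, List[str]], present: Set[str]) -> List[str]:
    """Return the names of teams that have nobody present (hypothetical helper)."""
    return [
        name
        for name, members in teams.items()
        if not any(member in present for member in members)
    ]


if __name__ == "__main__":
    roster = [f"student{i}" for i in range(1, 10)]  # made-up roster of nine students
    teams = build_teams(roster, team_size=5)
    # Only two students showed up, both of whom landed on Team 1.
    print(teams_missing_players(teams, present={"student1", "student3"}))
```

Any team the check returns would need a player moved over before the game can run, since each team needs at least one person.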

Things to consider when deciding if an exercise is a good fit for remote delivery and/or if it can be modified to meet your usage needs:

- EVALUATING THE SIMULATION

- What does this simulation require to work in class?

- Think about teams, breakout rooms, faculty intervention, student conversations between teams, a minimum number of students needed to play, etc.

- Will this simulation work remotely?

- e.g., Is it web-based? If not, do you have virtual machines or workspaces you can deploy to students and, if so, what type of setup does that entail on both the faculty and student sides?

- What does the setup process entail? If it requires breakout rooms, how many do you need and how can they be created? Is the faculty member comfortable running a simulation remotely?

- Can you modify aspects of the experience to make the simulation work remotely?

- Things to consider: Can you replace in-person interactions with digital ones? (For instance, with OPEQ we removed a “face-to-face” round and replaced it with chat.)

- Can the simulation work asynchronously? Is it possible to give students a window of time to complete the exercise?

- What is your normal level of support during an in-class run, and does that need to increase or decrease for a remote run?

- i.e., Can you be simply “on call” for tech questions, or do you have to sit in a virtual lecture room to monitor questions for the duration of the simulation? (For instance, with in-person OPEQ we are usually in the class and wandering the halls; for remote delivery, we were there for the first 10 minutes of the virtual class for the intro, then monitored the game remotely in an “on call” style while communicating with the professors over Slack and answering questions from students via email – a much more hands-off approach, which may be optimal for you.)

Considering that the Learning Lab had never run OPEQ remotely before, the exercise was a huge success, and it revealed a whole new (virtual) pathway for delivering Wharton’s interactive simulations while we’re all off-campus. This should give everyone hope that we can still provide students and faculty with many of the uniquely effective digital exercises and teaching tools that bring their course material to life.

Here are some other popular sims the Learning Lab can deliver remotely:

and more, including 3rd party simulations!

Surfing along the crest of this radical wave of new technologies is augmented reality (AR). Sometimes referred to as “blended reality,” it allows users to experience the real world, printed text, or even a classroom lesson with an overlay of additional 3D data content, amplifying access to instant information and bringing it to life. That, in turn, opens up thrilling new opportunities for experiential education.

Many students learn best when they’re able to access visual rather than verbal information. Whereas classroom materials that integrate visuals might include presentation slides, textbooks, handouts and the like, AR takes visuals to the next level.

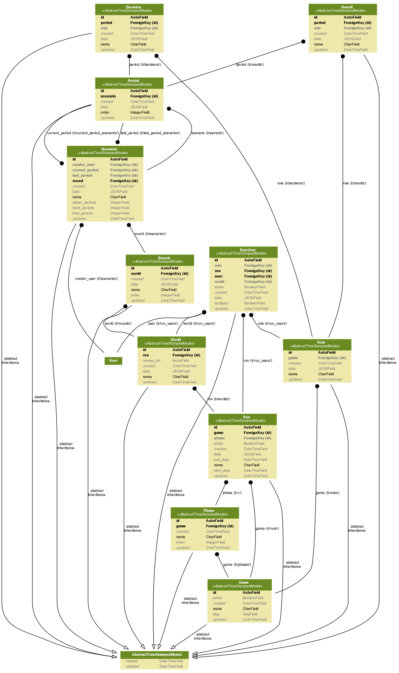

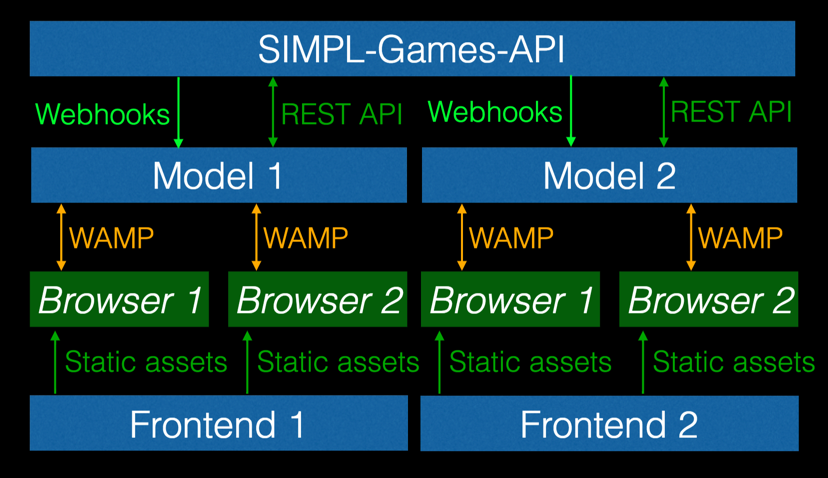

Code starts at the model level. So before we wrote one line of SIMPL (the Learning Lab’s new simulation framework), we needed to figure out what, exactly, our data model would look like. Considering the ambitious goal of the project (a simulation framework that could support all of our current games as well as games yet unknown), we had to be very careful to create one that would be flexible enough to adjust to our growing needs, but not so complex as to make development overly challenging.

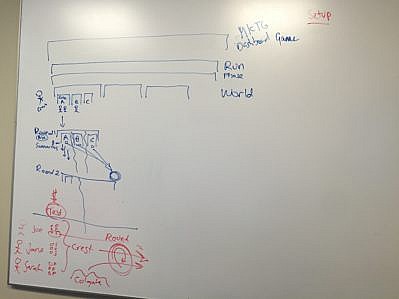

The results of one of our white-boarding sessions.